Deploying a pre-trained Inception model in TensorFlow Serving fails: SavedModel has no variables

Description

I am trying to deploy one of the pre-trained TensorFlow object detection models (faster_rcnn_inception_v2_coco_2018_01_28) in TF Serving. I followed these steps:

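1. Clone https://github.com/tensorflow/models

2. Download the trained Inception model checkpoint

3. Export the Inception model as described here, using this command (see the appendix for step 3). Note that I am changing input_type to encoded_image_string_tensor, so there is no need to attach an input tensor in the API to deserialize the input string.

4. Convert the exported model from the previous step into a servable using the following code (see the appendix for step 4)

5. Run TF Serving with model_base_path pointing to the folder created in the previous step.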
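TF Serving treats `model_base_path` as a directory of numbered version subdirectories, each holding one SavedModel (the logs in the step 5 appendix load from `.../1`). A minimal sketch, using only the standard library, for listing the versions a base path exposes. `list_model_versions` is a hypothetical helper name, not part of TF Serving, and it assumes the binary `saved_model.pb` format:

```python
import os


def list_model_versions(model_base_path):
    """Return the numeric version subdirectories that contain a saved_model.pb."""
    versions = []
    for entry in os.listdir(model_base_path):
        version_dir = os.path.join(model_base_path, entry)
        # Only subdirectories whose names are integers count as versions.
        if not (entry.isdigit() and os.path.isdir(version_dir)):
            continue
        # Assumption: the model was saved in binary form (saved_model.pb).
        if os.path.isfile(os.path.join(version_dir, "saved_model.pb")):
            versions.append(int(entry))
    return sorted(versions)
```

An empty result would suggest that `model_base_path` points at the wrong level, for example at the version directory itself.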

Problem

When querying the API's predict endpoint, the request fails; it looks like the model was not initialized correctly:

{ "error": "Attempting to use uninitialized value SecondStageFeatureExtractor/InceptionV2/Mixed_5c/Branch_3/Conv2d_0b_1x1/BatchNorm/moving_mean\\n\\t [[Node: SecondStageFeatureExtractor/InceptionV2/Mixed_5c/Branch_3/Conv2d_0b_1x1/BatchNorm/moving_mean/read = Identity[T=DT_FLOAT, _output_shapes=[[128]], _device=\\"/job:localhost/replica:0/task:0/device:CPU:0\\"](SecondStageFeatureExtractor/InceptionV2/Mixed_5c/Branch_3/Conv2d_0b_1x1/BatchNorm/moving_mean)]]" }

Indeed, the warning "The specified SavedModel has no variables; no checkpoints were restored." is written to the TF Serving logs (see the appendix for step 5).
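For reference, a request to the REST predict endpoint of a string-input model can be built roughly as follows. This is a sketch under assumptions: the model name `faster_rcnn_inception` and port 8501 are taken from the docker-compose logs in the step 5 appendix, the host `localhost` is a guess, and `build_predict_request` is a hypothetical helper. TF Serving's REST API expects binary values to be base64-encoded and wrapped as `{"b64": ...}`.

```python
import base64
import json


def build_predict_request(image_bytes, model_name="faster_rcnn_inception",
                          host="localhost", port=8501):
    """Return (url, json_body) for a TF Serving REST predict call."""
    url = "http://{}:{}/v1/models/{}:predict".format(host, port, model_name)
    # Binary inputs are base64-encoded and wrapped in a {"b64": ...} object.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    body = json.dumps({"instances": [{"b64": encoded}]})
    return url, body
```

The body can then be sent with any HTTP client, for example `requests.post(url, data=body)`.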

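What am I missing in the model export stage? Judging from older questions (this and this), I suspect this happens because step (3) freezes the variables into the saved model as constants. Is that right?

Appendix for step 3 - re-exporting the Inception model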

    python object_detection/export_inference_graph.py \
        --input_type encoded_image_string_tensor \
        --pipeline_config_path ${MODEL_DIR}/pipeline.config \
        --trained_checkpoint_prefix ${MODEL_DIR}/model.ckpt \
        --inference_graph_path ${MODEL_DIR} \
        --export_as_saved_model=True \
        --write_inference_graph=True \
        --output_directory ${OUTPUT_DIR}

This command produces the following model:

    MetaGraphDef with tag-set: 'serve' contains the following SignatureDefs:

    signature_def['serving_default']:
      The given SavedModel SignatureDef contains the following input(s):
        inputs['inputs'] tensor_info:
            dtype: DT_STRING
            shape: (-1)
            name: encoded_image_string_tensor:0
      The given SavedModel SignatureDef contains the following output(s):
        outputs['detection_boxes'] tensor_info:
            dtype: DT_FLOAT
            shape: (-1, 100, 4)
            name: detection_boxes:0
        outputs['detection_classes'] tensor_info:
            dtype: DT_FLOAT
            shape: (-1, 100)
            name: detection_classes:0
        outputs['detection_scores'] tensor_info:
            dtype: DT_FLOAT
            shape: (-1, 100)
            name: detection_scores:0
        outputs['num_detections'] tensor_info:
            dtype: DT_FLOAT
            shape: (-1)
            name: num_detections:0
      Method name is: tensorflow/serving/predict

Appendix for step 4 - saving the model as a servable

    import os
    import shutil

    import tensorflow as tf

    tf.app.flags.DEFINE_string('checkpoint_dir', '/tmp/inception_train',
                               """Directory where to read training checkpoints.""")
    tf.app.flags.DEFINE_string('output_dir', '/tmp/faster_rcnn_inception_v2_coco_2018_01_28-export/',
                               """Directory where to export inference model.""")
    tf.app.flags.DEFINE_integer('model_version', 1,
                                """Version number of the model.""")
    tf.app.flags.DEFINE_string('summaries_dir', '/tmp/tensorboard_data',
                               """Directory where to store tensorboard data.""")
    FLAGS = tf.app.flags.FLAGS


    def main(_):
        with tf.Graph().as_default() as graph:
            saver = tf.train.import_meta_graph(meta_graph_or_file=os.path.join(FLAGS.checkpoint_dir, 'model.ckpt.meta'))
            with tf.Session(graph=graph) as sess:
                saver.restore(sess, tf.train.latest_checkpoint(FLAGS.checkpoint_dir))

                # (re-)create export directory
                export_path = os.path.join(
                    tf.compat.as_bytes(FLAGS.output_dir),
                    tf.compat.as_bytes(str(FLAGS.model_version)))
                if os.path.exists(export_path):
                    shutil.rmtree(export_path)

                tf.global_variables_initializer().run()
                tf.local_variables_initializer().run()

                print("tf.global_variables()")
                print(sess.run(tf.global_variables()))
                print("tf.local_variables()")
                print(sess.run(tf.local_variables()))

                # create model builder
                builder = tf.saved_model.builder.SavedModelBuilder(export_path)

                # Build the signature_def_map.
                predict_inputs_tensor_info = tf.saved_model.utils.build_tensor_info(graph.get_tensor_by_name('encoded_image_string_tensor:0'))
                boxes_output_tensor_info = tf.saved_model.utils.build_tensor_info(graph.get_tensor_by_name('detection_boxes:0'))

                prediction_signature = (
                    tf.saved_model.signature_def_utils.build_signature_def(
                        inputs={
                            'images': predict_inputs_tensor_info
                        },
                        outputs={
                            'classes': boxes_output_tensor_info
                        },
                        method_name=tf.saved_model.signature_constants.PREDICT_METHOD_NAME
                    )
                )

                builder.add_meta_graph_and_variables(sess,
                                                     [tf.saved_model.tag_constants.SERVING],
                                                     signature_def_map={
                                                         tf.saved_model.signature_constants.DEFAULT_SERVING_SIGNATURE_DEF_KEY:
                                                             prediction_signature,
                                                     },
                                                     legacy_init_op=None)

                builder.save(as_text=False)

                merged = tf.summary.merge_all()
                train_writer = tf.summary.FileWriter(FLAGS.summaries_dir + '/' + str(FLAGS.model_version),
                                                     sess.graph)

        print("Successfully exported Faster RCNN Inception model version '{}' into '{}'".format(
            FLAGS.model_version, FLAGS.output_dir))


    if __name__ == '__main__':
        tf.app.run()

This produces the following servable:

    MetaGraphDef with tag-set: 'serve' contains the following SignatureDefs:

    signature_def['serving_default']:
      The given SavedModel SignatureDef contains the following input(s):
        inputs['images'] tensor_info:
            dtype: DT_STRING
            shape: (-1)
            name: encoded_image_string_tensor:0
      The given SavedModel SignatureDef contains the following output(s):
        outputs['classes'] tensor_info:
            dtype: DT_FLOAT
            shape: unknown_rank
            name: detection_boxes:0
      Method name is: tensorflow/serving/predict

Appendix for step 5 - docker-compose logs from deploying the servable

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.191941: I tensorflow_serving/model_servers/main.cc:153] Building single TensorFlow model file config: model_name: faster_rcnn_inception model_base_path: /tmp/faster_rcnn_inception_v2_coco_2018_01_28_string_input_version-export/

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.192341: I tensorflow_serving/model_servers/server_core.cc:459] Adding/updating models.

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.192465: I tensorflow_serving/model_servers/server_core.cc:514] (Re-)adding model: faster_rcnn_inception

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.195056: I tensorflow_serving/core/basic_manager.cc:716] Successfully reserved resources to load servable {name: faster_rcnn_inception version: 1}

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.195241: I tensorflow_serving/core/loader_harness.cc:66] Approving load for servable version {name: faster_rcnn_inception version: 1}

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.195404: I tensorflow_serving/core/loader_harness.cc:74] Loading servable version {name: faster_rcnn_inception version: 1}

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.195652: I external/org_tensorflow/tensorflow/contrib/session_bundle/bundle_shim.cc:360] Attempting to load native SavedModelBundle in bundle-shim from: /tmp/faster_rcnn_inception_v2_coco_2018_01_28_string_input_version-export/1

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.195829: I external/org_tensorflow/tensorflow/cc/saved_model/loader.cc:242] Loading SavedModel with tags: { serve }; from: /tmp/faster_rcnn_inception_v2_coco_2018_01_28_string_input_version-export/1

tf-serving-base exited with code 0

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.313633: I external/org_tensorflow/tensorflow/core/platform/cpu_feature_guard.cc:141] Your CPU supports instructions that this TensorFlow binary was not compiled to use: AVX2 FMA

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.492904: I external/org_tensorflow/tensorflow/cc/saved_model/loader.cc:161] Restoring SavedModel bundle.

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.493224: I external/org_tensorflow/tensorflow/cc/saved_model/loader.cc:171] The specified SavedModel has no variables; no checkpoints were restored.

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.493329: I external/org_tensorflow/tensorflow/cc/saved_model/loader.cc:196] Running LegacyInitOp on SavedModel bundle.

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.512745: I external/org_tensorflow/tensorflow/cc/saved_model/loader.cc:291] SavedModel load for tags { serve }; Status: success. Took 316614 microseconds.

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.513085: I tensorflow_serving/servables/tensorflow/saved_model_warmup.cc:83] No warmup data file found at /tmp/faster_rcnn_inception_v2_coco_2018_01_28_string_input_version-export/1/assets.extra/tf_serving_warmup_requests

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.513927: I tensorflow_serving/core/loader_harness.cc:86] Successfully loaded servable version {name: faster_rcnn_inception version: 1}

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.516875: I tensorflow_serving/model_servers/main.cc:323] Running ModelServer at 0.0.0.0:8500 ...

tf-serving-faster_rcnn_inception | [warn] getaddrinfo: address family for nodename not supported

tf-serving-faster_rcnn_inception | 2018-08-16 16:05:28.518018: I tensorflow_serving/model_servers/main.cc:333] Exporting HTTP/REST API at:localhost:8501 ...

tf-serving-faster_rcnn_inception | [evhttp_server.cc : 235] RAW: Entering the event loop ...

Notes:

- Python 2.7 in steps (3), (4) and (5)

- TensorFlow 1.10.0, installed via pip.

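1 answer:

Answer 0 (score: 1)

Copying this over from my answer here.

I also struggled a lot with this, and was able to export the model (including the variables files) by updating @lionel92's suggestion for the current OD API code version (as of July 2, 2019). It mostly involves changing the write_saved_model function in models/research/object_detection/exporter.py.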

Update write_saved_model in exporter.py

    def write_saved_model(saved_model_path,
                          trained_checkpoint_prefix,
                          inputs,
                          outputs):

        saver = tf.train.Saver()
        with tf.Session() as sess:
            saver.restore(sess, trained_checkpoint_prefix)

            builder = tf.saved_model.builder.SavedModelBuilder(saved_model_path)

            tensor_info_inputs = {
                'inputs': tf.saved_model.utils.build_tensor_info(inputs)}
            tensor_info_outputs = {}
            for k, v in outputs.items():
                tensor_info_outputs[k] = tf.saved_model.utils.build_tensor_info(v)

            detection_signature = (
                tf.saved_model.signature_def_utils.build_signature_def(
                    inputs=tensor_info_inputs,
                    outputs=tensor_info_outputs,
                    method_name=tf.saved_model.signature_constants.PREDICT_METHOD_NAME
                ))

            builder.add_meta_graph_and_variables(
                sess,
                [tf.saved_model.tag_constants.SERVING],
                signature_def_map={
                    tf.saved_model.signature_constants
                    .DEFAULT_SERVING_SIGNATURE_DEF_KEY:
                        detection_signature,
                },
            )
            builder.save()

Update _export_inference_graph in exporter.py

Then, in the _export_inference_graph function, update the final line to pass the checkpoint prefix, like this:

    write_saved_model(saved_model_path, trained_checkpoint_prefix,
                      placeholder_tensor, outputs)
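Note that `trained_checkpoint_prefix` is a prefix rather than a file on disk: a TF 1.x checkpoint is stored as an `.index` file plus one or more `.data-XXXXX-of-YYYYY` shards (and usually a `.meta` graph file). A small sketch for checking a prefix before running the export, written for Python 3. `checkpoint_files_present` is a hypothetical helper, not an OD API function:

```python
import glob
import os


def checkpoint_files_present(checkpoint_prefix):
    """Return True if the index file and at least one data shard exist."""
    has_index = os.path.isfile(checkpoint_prefix + ".index")
    # Data shards are named <prefix>.data-00000-of-00001 and so on.
    has_data = bool(glob.glob(glob.escape(checkpoint_prefix) + ".data-*"))
    return has_index and has_data
```

If this returns False, `saver.restore` is likely to fail because it cannot find the checkpoint files.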

Call the export script

Call models/research/object_detection/export_inference_graph.py as usual. For me, that looks like this:

    INPUT_TYPE=encoded_image_string_tensor
    PIPELINE_CONFIG_PATH=/path/to/model.config
    TRAINED_CKPT_PREFIX=/path/to/model.ckpt-50000
    EXPORT_DIR=/path/to/export/dir/001/

    python $BUILDS_DIR/models/research/object_detection/export_inference_graph.py \
        --input_type=${INPUT_TYPE} \
        --pipeline_config_path=${PIPELINE_CONFIG_PATH} \
        --trained_checkpoint_prefix=${TRAINED_CKPT_PREFIX} \
        --output_directory=${EXPORT_DIR}

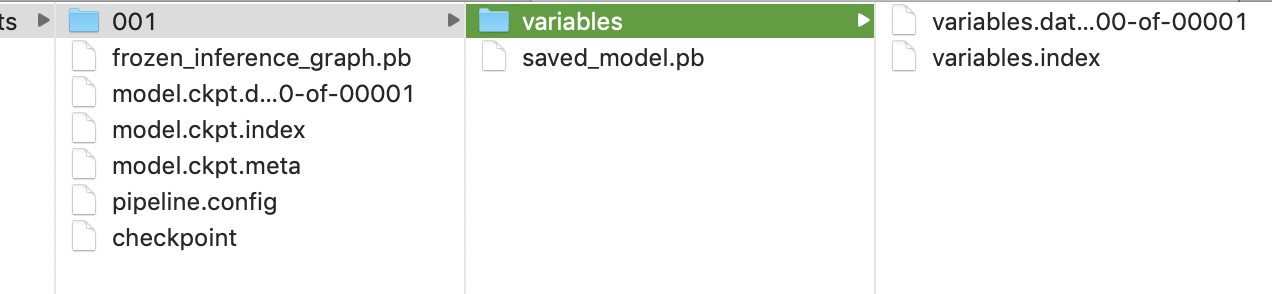

If it works, you should see a directory structure like this. It is then ready to be dropped into a TF Serving Docker image for scaled inference.
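As a rough check of the exported directory, assuming the standard SavedModel layout (a `saved_model.pb` next to a `variables/` directory that contains `variables.index` and at least one `variables.data-*` shard), something like the following can be run before deploying. `saved_model_has_variables` is a hypothetical helper name, and the sketch targets Python 3:

```python
import glob
import os


def saved_model_has_variables(export_dir):
    """Return True if export_dir looks like a SavedModel with variable files."""
    variables_dir = os.path.join(export_dir, "variables")
    has_graph = os.path.isfile(os.path.join(export_dir, "saved_model.pb"))
    has_index = os.path.isfile(os.path.join(variables_dir, "variables.index"))
    # Weights live in one or more shards named variables.data-XXXXX-of-YYYYY.
    shard_pattern = os.path.join(glob.escape(variables_dir), "variables.data-*")
    has_shards = bool(glob.glob(shard_pattern))
    return has_graph and has_index and has_shards
```

An export for which this returns False would presumably produce the "has no variables" message from the question when loaded.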
